Writer: Minnoli Pitale

Editor: Altay Shaw

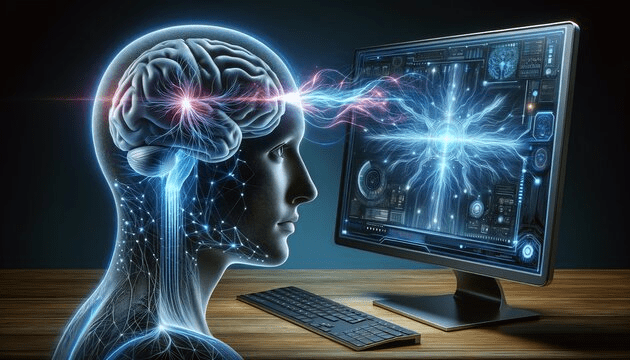

Imagine a patient sitting in a chair, unable to speak, having been paralysed their entire life. Yet each time they try to form a sentence in their mind, words appear on a screen, one after the other, thought by thought. This is the promise of brain-computer interface (BCI) research. For centuries, the human mind has been humanity's private oasis, a fortress beyond the reach of technology. But what happens when a computer decodes thoughts directly from brain activity? The emergence of BCIs challenges that assumption. The technology works by recording brain signals, interpreting them, and translating them into output commands.

Essentially, a brain-computer interface is a direct communication pathway between the human brain and an external device, independent of the conventional physiological output pathways of peripheral nerves and muscles. Rather than relying on movement or speech, the fundamental tenet of the concept is that an individual’s intentions are conveyed through measurable neural activity. Two prominent ways of recording neural activity are electroencephalography (EEG) and electrocorticography (ECoG). The former detects electrical activity from the scalp, whereas the latter is more invasive, measuring signals directly from the brain’s surface. Recording from individual neurones requires a further step: intracortical electrodes implanted in the brain tissue itself.

BCI systems have a complex, multi-stage architecture in which several steps run in sequence. First, electrodes detect neural signals, which are then digitised. Next, the signals are processed to extract informative features, such as the amplitude of evoked potentials, oscillatory rhythms (e.g., sensorimotor mu or beta rhythms), or neuronal firing rates. Computational algorithms then interpret these features and translate the neural activity into device commands. Most importantly, BCI systems operate through an adaptive feedback loop between user and system: the user gradually learns to modulate their neural signals, while the algorithm adapts to those signals in turn. This feedback loop turns neural signals from passive reflections of brain activity into intentional outputs that can operate communication devices, computers, and assistive technology.
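The stages described above can be illustrated with a deliberately small sketch. Everything in it is an assumption made for illustration rather than a description of any real BCI: the signal is synthetic, the sampling rate, the 8 to 12 Hz mu band limits, and the starting power levels are invented, and the names `band_power`, `synthetic_window`, and `AdaptiveDecoder` are hypothetical. It relies on one well-documented effect, that mu-rhythm power tends to drop during attempted or imagined movement, and adapts by nudging a running average for whichever command it just decoded.

```python
import math

SAMPLE_RATE_HZ = 128  # assumed sampling rate of the digitised signal


def band_power(samples, low_hz, high_hz, rate_hz=SAMPLE_RATE_HZ):
    """Feature extraction: mean spectral power in [low_hz, high_hz] via a plain DFT."""
    n = len(samples)
    total, bins = 0.0, 0
    for k in range(1, n // 2):
        freq = k * rate_hz / n
        if low_hz <= freq <= high_hz:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(samples))
            total += (re * re + im * im) / (n * n)
            bins += 1
    return total / bins if bins else 0.0


def synthetic_window(mu_amplitude, seconds=1.0):
    """Stand-in for electrode data: a 10 Hz mu rhythm plus a weaker 20 Hz component."""
    n = int(SAMPLE_RATE_HZ * seconds)
    return [
        mu_amplitude * math.sin(2 * math.pi * 10 * i / SAMPLE_RATE_HZ)
        + 0.2 * math.sin(2 * math.pi * 20 * i / SAMPLE_RATE_HZ)
        for i in range(n)
    ]


class AdaptiveDecoder:
    """Toy translator: low mu power -> MOVE, high mu power -> REST."""

    def __init__(self, rest_power, move_power, rate=0.2):
        # Running estimates of typical mu power for each command (assumed values).
        self.means = {"REST": rest_power, "MOVE": move_power}
        self.rate = rate

    @property
    def threshold(self):
        # Decision boundary sits halfway between the two running estimates.
        return (self.means["REST"] + self.means["MOVE"]) / 2

    def decode(self, window):
        power = band_power(window, 8.0, 12.0)
        command = "MOVE" if power < self.threshold else "REST"
        # Adaptation: move the decoded command's estimate towards the new observation.
        self.means[command] += self.rate * (power - self.means[command])
        return command


decoder = AdaptiveDecoder(rest_power=0.04, move_power=0.004)
for amplitude in (1.0, 0.2):  # strong mu rhythm, then a suppressed one
    print(amplitude, decoder.decode(synthetic_window(amplitude)))
```

Real systems replace each piece with something far more capable (multi-channel filtering, trained classifiers, supervised recalibration), but the shape of the loop is the same: acquire, extract a feature, translate it, then adapt.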

However, this explanation barely scratches the surface of a complex, multifaceted technology and raises an intriguing question: “How does a machine convert noisy, variable neural signals into language?” Decoding these signals entails identifying the electrophysiological signatures of speech and language. Speech-decoding BCIs target areas of the brain involved in language comprehension and production, including the classical language regions of Broca’s area and Wernicke’s area as well as the motor regions that control the vocal tract. Techniques such as intracortical electrode arrays and electrocorticography are used to record the changes in electrical activity that accompany the user’s attempts at speaking, whether physically attempted or only imagined. These signals are then processed by machine learning algorithms that learn to associate neural patterns with units of language, such as phonemes, syllables, and even complete words. By combining this neural decoding with language models, the BCI system transforms brain activity into text or a synthesised voice, although not yet without errors.
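To make the pairing of neural decoding and a language model concrete, here is a minimal sketch under heavy assumptions. The per-step phoneme probabilities are made-up numbers standing in for the output of a trained neural decoder, the two-word lexicon and the word priors are invented, and `score` and `decode_word` are hypothetical helper names. The point is only to show how a language-model prior can tip the balance when the neural evidence is ambiguous.

```python
import math

# Hypothetical decoder output: for each time step, a probability per phoneme.
# These numbers are invented; a real system would produce them from neural data.
PHONEME_PROBS = [
    {"k": 0.50, "b": 0.40, "ae": 0.05, "t": 0.05},  # first sound: "k" or "b"?
    {"k": 0.05, "b": 0.05, "ae": 0.85, "t": 0.05},
    {"k": 0.05, "b": 0.05, "ae": 0.10, "t": 0.80},
]

# Toy pronunciation lexicon (assumed).
LEXICON = {"cat": ["k", "ae", "t"], "bat": ["b", "ae", "t"]}

UNIFORM_PRIOR = {"cat": 0.5, "bat": 0.5}
# Toy language-model prior, e.g. after the context "the baseball ..." (assumed).
CONTEXT_PRIOR = {"cat": 0.2, "bat": 0.8}


def score(word, probs, prior):
    """Log-probability of the neural evidence for a word plus its log prior."""
    evidence = sum(math.log(step[ph]) for step, ph in zip(probs, LEXICON[word]))
    return evidence + math.log(prior[word])


def decode_word(probs, prior):
    """Return the best-scoring word whose pronunciation matches the number of steps."""
    candidates = [w for w, phones in LEXICON.items() if len(phones) == len(probs)]
    return max(candidates, key=lambda w: score(w, probs, prior))


print(decode_word(PHONEME_PROBS, UNIFORM_PRIOR))
print(decode_word(PHONEME_PROBS, CONTEXT_PRIOR))
```

With no contextual preference the slightly stronger neural evidence for "k" decides the word; with the contextual prior, the other candidate scores higher. Practical decoders work over much larger vocabularies and whole sentences, but they lean on the same idea of weighing neural evidence against what the language makes likely.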

Nevertheless, the most riveting implications of BCI systems are not technological at all; rather, they are thoroughly human. The significance of BCI systems extends far beyond high-fidelity neural decoding. Their virtue lies in their ability to restore a fundamental human ability: communication. For individuals with amyotrophic lateral sclerosis (ALS) or locked-in syndrome, paralysis can take away the ability to speak or move; BCI systems can offer an alternative mode of expression. Translating neural signals into text allows users to take part in conversations and express their thoughts. Beyond their clinical significance, BCI systems may also prove fruitful in human-computer interaction, by supporting faster communication, and in neuroprosthetic systems controlled directly by brain activity. BCI systems therefore have applications across a wide range of fields.

However, despite their rapid development, BCI systems have their fair share of drawbacks. Neural signals are highly complex and dynamic, which makes decoding them accurately into continuous speech a difficult feat. Improving both the accuracy and the speed of decoding therefore remains a crucial research focus for scientists globally. Yet the most critical question these systems raise is one of ethics: who owns neural data, and how can it be used ethically? As BCI systems move closer to real-world applications, they need to be developed in ways that address the ethical challenges they bring forth. Addressing these challenges can unlock the extraordinary potential of these systems. They would not only give patients a voice but also redraw the boundary between thought and expression itself, taking us a step into a world where our thoughts are no longer private.